Company Details

- Address: Seattle, WA 98125, US

- Industry: Technology, Information and Internet

- Website: https://inferx.net

- Keywords: Platform

- HQ: Seattle, WA

Please complete the CAPTCHA to continue

Find emails, phones & company data instantly

Your AI prospecting assistant

0 records × $0.02 per record

Secure checkout

Total price

$0.50

Enter your payment details below to complete the download. We'll email you the CSV as soon as it's ready.

Processing your payment...

Please wait while we complete your transaction

Sign in required

Create a free account or sign in to continue downloading the full results.

Sign up for a free account. No credit card required. Up to 10 free credits.

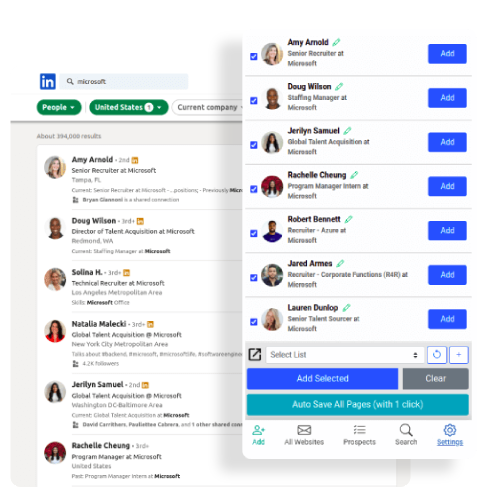
