N Nikhila Email and Phone Number

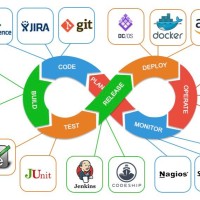

10+ years of professional IT experience in cloud computing and CI/CD (Continuous Integration/Continuous Deployment) processes, with a strong background in Linux/Unix administration, build and release management, and cloud implementation.

• Created, tested, and deployed end-to-end CI/CD pipelines for various applications using Jenkins as the main integration server for Dev, QA, Staging, UAT, and Prod environments under Agile methodology.
• Experienced in AWS cloud services such as IAM, EC2, S3, AMI, VPC, Auto Scaling, Security Groups, Route53, ELB, EBS, RDS, SNS, SQS, CloudWatch, CloudFormation, CloudFront, Snowball, and Glacier.
• Experienced in optimizing volumes with EC2 instances, creating multiple VPCs, deploying applications on AWS using Elastic Beanstalk, and setting up Route53 for AWS web instances.
• Configured AWS Identity and Access Management (IAM) groups and users for improved login authentication, attached policies to groups using the policy generator, and set permissions based on requirements, specifying Amazon Resource Names (ARNs).
• Experienced in deploying serverless applications using AWS CloudFormation templates, configuring AWS Lambda functions, Amazon API Gateway, and related resources.
• Created CloudFormation templates for different environments (dev/stage/prod) to automate infrastructure for ELB, CloudWatch alarms, SNS, and RDS services.
• Wrote Terraform templates for Azure infrastructure as code to build staging and production environments, and created reusable Terraform modules for both Azure and AWS cloud environments.
• Experienced with Microsoft Azure cloud services (PaaS and IaaS): Azure Key Vault, Application Insights, App Services, ExpressRoute, Traffic Manager, VPN, and Load Balancing.
• Experienced in using the Azure Key Vault service to generate, import, and store cryptographic keys used to encrypt and decrypt data, providing a secure way to manage keys for applications and services.
• Experienced in Google Cloud Platform (GCP) services such as Compute Engine, Cloud Functions, Cloud DNS, Cloud Storage, and Cloud Deployment Manager, and in the SaaS, PaaS, and IaaS concepts of cloud computing and their implementation using GCP.
• Experienced in working with the GCP Cloud Firestore NoSQL database, creating indexes and database collections using Terraform scripting to set up a Workday employer using those collections.
• Experienced with the Python, Bash, PowerShell, YAML, and Groovy scripting languages; developed shell and Python scripts to automate day-to-day administrative tasks for the build and release process.

Fannie Mae

Sr. Cloud/DevOps Engineer, Fannie Mae (Aug 2022 - Present), Reston, Virginia, United States

• Involved in designing, configuring, and managing public cloud infrastructure on Amazon Web Services (AWS), including EC2, Auto Scaling, Elastic Load Balancer, CodeBuild, Elastic Beanstalk, S3, Lambda, Glacier, CloudFront, RDS, VPC, Direct Connect, Route53, CloudWatch, CloudFormation, IAM, and SNS.
• Configured and deployed Java applications on AWS using the AWS stack and CloudFormation, and managed cloud VMs with the AWS EC2 command-line clients and the AWS Management Console.
• Whitelisted IP addresses on AWS EC2 instances to allow access to the MSSQL database and VM instances through a bastion host in all environments.
• Integrated Amazon CloudWatch with Amazon EC2 instances to monitor and store log files and track metrics.
• Provided IaaS support to the client on Microsoft Azure and created custom virtual machines (instances) through PowerShell scripts and the Azure portal.
• Used Azure services such as Web Apps and Function Apps to implement Azure deployment slots, minimizing interruptions and downtime during database and app configuration updates.
• Migrated moderate workloads from on-premises to Azure IaaS, published web service APIs using the Azure API Management service, and provided high availability for IaaS instances and PaaS role instances accessed by other services.
• Managed Azure services such as Key Vault for storing keys and secret values, and configured alerts in Application Insights to send email notifications for errors such as 5xx and 401 responses.
• Used Azure DevOps Pipelines for continuous integration and continuous deployment to automate the build, test, and deployment of applications; the service supports various programming languages and platforms and can deploy code to Azure or other cloud providers.

Sr. Azure Cloud Engineer, CVS Health (Jun 2020 - Jul 2022), Hartford County, Connecticut, United States

As an Azure Cloud Engineer, I migrated data from on-premises to the Azure cloud platform, working across the available cloud services. I worked closely with the infrastructure team to obtain the code, create YAML files for CI deployment, and push the code to SonarQube for code quality scans.

• Established connections from Azure to the on-premises datacenter using Azure ExpressRoute for single- and multi-subscription connectivity.
• Deployed Azure IaaS virtual machines (VMs) and cloud services (PaaS role instances) into secure VNets and subnets to host applications on multiple web servers, and maintained load balancing and high availability using the Azure platform.
• Designed and implemented Azure cloud infrastructure using ARM templates, runbooks, automation, and provisioning processes.
• Used Azure network security groups (NSGs), a network security feature in Azure, to filter network traffic to and from Azure resources in a virtual network.
• Involved in designing release pipelines with Azure DevOps for release management, enabling consistent and reliable automated deployments across multiple environments.
• Built Azure DevOps pipeline jobs to create Azure infrastructure from GitHub repositories containing Terraform code, and administered them to manage weekly builds.
• Leveraged Terraform modules to standardize the creation of Azure virtual networks, VMs, and storage resources, resulting in faster and more reliable deployments.
• Created Docker images from Dockerfiles, worked with Docker container snapshots, removed images, and managed Docker volumes for virtualized servers per QA and Dev environment requirements.
• Used Docker Compose and Docker Machine to create Docker containers for testing applications in the QA environment, and automated the deployment and management of containerized applications.

DevOps SRE, Truist (Aug 2018 - May 2020), Charlotte, North Carolina, United States

As a DevOps SRE, I maintained Azure and GCP cloud resources and took part in the continuous integration and continuous deployment process using Jenkins. My team mainly loaded employer configurations into the Workday server, provided clients with their information, and monitored them using the Firestore database. We used GCP to create resources for storing client information.

• Involved in managing GCP services such as Compute Engine, App Engine, Cloud Storage, VPC, Load Balancing, Firewalls, and Stackdriver, and in migrating a legacy application to GCP.
• Involved in setting up GCP firewall rules to secure the infrastructure by allowing or denying traffic to and from VM instances; used GCP Cloud CDN (content delivery network) to deliver content from GCP cache locations, improving user experience and reducing latency.
• Extensively used Google Stackdriver to monitor the logs of both GKE and GCP instances, and configured Stackdriver alerts for some application services in all environments.
• Implemented Terraform modules and scripts for automatic provisioning of cloud resources in the GCP environment, and was responsible for creating and managing environments for multiple projects.
• Configured Azure Multi-Factor Authentication (MFA) as part of Azure AD Premium to securely authenticate users, created custom Azure templates for quick deployments, and deployed Azure SQL DB with geo-replication.
• Used the Azure Container Instances (ACI) service to deploy and run containers without having to manage the underlying infrastructure, virtual machines, or orchestrators.
• Created Google Cloud Storage buckets using Terraform modules to store application data, and used that data for import/export across environments.

AWS DevOps Engineer, Fidelity Investments (Jun 2016 - Jul 2018), Boston, Massachusetts, United States

• Automated AWS components such as EC2 instances, security groups, ELB, RDS, and IAM through AWS CloudFormation templates.
• Set up Virtual Private Clouds (VPCs), network ACLs, security groups, and route tables across Amazon Web Services, and administered load balancers (ELB), Route53, networking, and Auto Scaling groups for high availability.
• Created flow logs on VPCs to monitor the built VPCs, configured IAM, and built EC2 instances with firewall rules defined through security groups (SGs) and network access control lists (NACLs).
• Managed AWS EC2 instances using Auto Scaling, Elastic Load Balancing, and Glacier for the QA and UAT environments, and managed infrastructure servers for Git and Chef.
• Used Terraform to customize infrastructure on AWS, configuring various AWS resources to build production infrastructure from repeatable designs.
• Managed clusters using Kubernetes and created pods, replication controllers, services, deployments, labels, and health checks.
• Created a script to run SonarQube in the GitLab CI/CD pipeline to provide static code analysis and code coverage reporting.
• Involved in providing a consistent environment using Kubernetes for deploying and scaling the application from development through production, easing the code development and deployment pipeline by implementing Docker containerization.
• Integrated Kubernetes with network, storage, and security to provide comprehensive infrastructure, and orchestrated containers across multiple hosts.
• Integrated Jenkins with Docker containers using the CloudBees Docker Pipeline plugin to push all microservice builds to the Docker registry and then deploy them to Kubernetes.
• Worked with Linux systems and virtualization in a large-scale environment, and worked with environment variables, configuration files, and strings in Docker.

Build and Release Engineer, Tech Mahindra (May 2013 - May 2016), Hyderabad, Telangana, India

● Designed, developed, and implemented new methods and procedures for technical solutions that met project requirements, including designs for major and highly complex systems.
● Worked on tool migrations from older tools: PVCS version control to SVN, Tracker to Jira, and the CI tool Hudson to Jenkins.
● Installed, configured, and administered Apache web server and Tomcat server on RHEL. Developed and maintained UNIX scripts for build and release tasks.
● Responsible for defining mapping parameters, variables, and session parameters according to requirements, and for using workflow variables to trigger emails in the QA and UAT environments.
● Used a Git branching strategy that included DevOps branches, feature branches, staging branches, and master, with pull requests and code reviews.
● Involved in system administration, server builds, migration, troubleshooting, security, backup, disaster recovery, performance monitoring, and fine-tuning on Red Hat Linux and Windows systems.
● Installed and configured VMware ESX server instances for virtual server setup and deployment. Responsible for creating VMware virtual guests running Solaris, Linux, and Windows.
● Wrote Bash and shell scripts to monitor system resources and perform system maintenance. Also wrote Perl and Python scripts to generate statistics and monitor processes.
● Installed and configured Nexus Repository Manager to distribute build artifacts within the organization.

Environment: Python, R, JIRA, Ansible, PySpark, Oracle, ServiceNow, Spring MVC, RESTful, RPM

N Nikhila Education Details

Master's Degree

Frequently Asked Questions about N Nikhila

What company does N Nikhila work for?

N Nikhila works for Fannie Mae.

What is N Nikhila's role at the current company?

N Nikhila's current role is Sr. Cloud/DevOps Engineer at Fannie Mae | AWS | Azure | GCP | Python | Terraform | Jenkins | Docker | Kubernetes.

What schools did N Nikhila attend?

N Nikhila attended University Of New Haven.
